1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

24

25

26

27

28

29

30

31

32

33

34

35

36

37

38

39

40

41

42

43

44

45

46

47

48

49

50

51

52

53

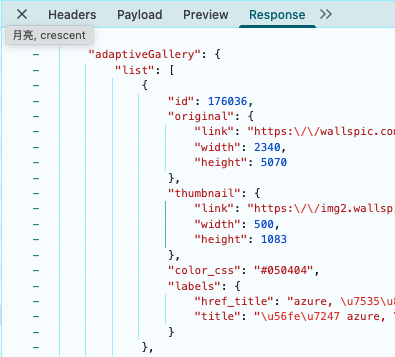

| {

"adaptiveGallery": {

"list": [

{

"id": 176036,

"original": {

"link": "https:\\/\\/wallspic.com\\/cn\\/image\\/176036-azure-dian_lan_se_de-yuan_quan-fu_hao-yi_shu",

"width": 2340,

"height": 5070

},

"thumbnail": {

"link": "https:\\/\\/img2.wallspic.com\\/previews\\/6\\/3\\/0\\/6\\/7\\/176036\\/176036-azure-dian_lan_se_de-yuan_quan-fu_hao-yi_shu-500x.jpg",

"width": 500,

"height": 1083

},

"color_css": "#050404",

"labels": {

"href_title": "azure, 电蓝色的, 圆圈, 符号, 艺术",

"title": "图片 azure, 电蓝色的, 圆圈, 符号, 艺术"

}

}

],

"resolution": null

},

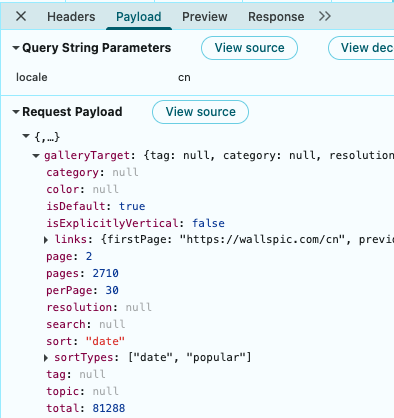

"galleryTarget": {

"tag": null,

"category": null,

"resolution": null,

"topic": null,

"color": null,

"search": null,

"sort": "date",

"page": 2,

"perPage": 30,

"total": 81288,

"isDefault": true,

"isExplicitlyVertical": false,

"pages": 2710,

"sortTypes": [

"date",

"popular"

],

"links": {

"firstPage": "https:\\/\\/wallspic.com\\/cn",

"previousPage": "https:\\/\\/wallspic.com\\/cn",

"nextPage": "https:\\/\\/wallspic.com\\/cn?page=3",

"sort": {

"date": "https:\\/\\/wallspic.com\\/cn",

"popular": "https:\\/\\/wallspic.com\\/cn\\/album\\/popular"

}

}

}

}

|